The H13-211 Huawei Certified ICT Associate-Intelligent Computing exam is available at PassQuestion. The newly released H13-211 training material from PassQuestion is an effective way to prepare for the HCIA-Intelligent Computing V1.0 H13-211 exam. It provides real H13-211 questions and answers to help you get ready for the exam.

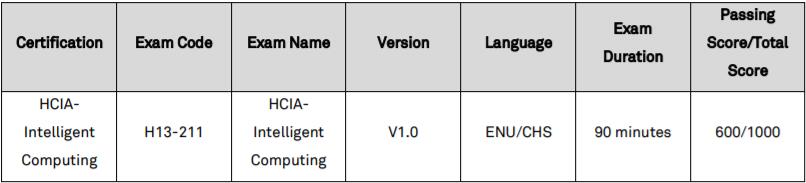

HCIA-Intelligent Computing V1.0 Certification Exam

The HCIA-Intelligent Computing V1.0 certification aims to equip engineers with basic knowledge and skills in the intelligent computing field, including computing industry basics, chip technology, and computing system architecture and products.

The HCIA-Intelligent Computing V1.0 curriculum covers a brief history and the development trend of the computing industry, an overview of the computing system architecture, computing platform products and common technologies, and industry solution cases.

Passing the HCIA-Intelligent Computing V1.0 certification indicates that you have basic knowledge of the intelligent computing industry, the software and hardware architecture of the computing system, common server and chip technologies, and application practices in typical industry solutions. You will have the knowledge and skills required for roles such as intelligent computing pre-sales technical support, intelligent computing after-sales technical support, intelligent computing product sales, intelligent computing project management, server engineer, and data center IT engineer.

Enterprises that employ HCIA-Intelligent Computing V1.0 certified engineers will be able to carry out basic design, operation, and maintenance of intelligent computing products and solutions to meet the challenges of the intelligent computing era.

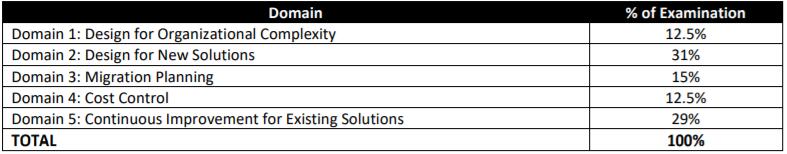

H13-211 Exam Content - HCIA-Intelligent Computing V1.0

The HCIA-Intelligent Computing exam includes: Brief History and Development Trend of the Computing Industry, Overview of the Computing System Architecture, Introduction to Computing Platform Products, Common Technologies and O&M of the Computing Platform and Industry Solution Cases.

1. Brief History and Development Trend of the Computing Industry 10%

Brief History and Development Trend of the Computing Industry

Classification and Features of Processor Chips

Definition and Characteristics of Heterogeneous Computing

2. Overview of the Computing System Architecture 20%

Definition of the Computing System

Product Types of the Computing System

Introduction to Mainstream Players in the Computing System

3. Introduction to Computing Platform Products 30%

Server Type and Software and Hardware Structure

Key Components of the Server

Introduction to Computing Product Software

4. Common Technologies and O&M of the Computing Platform 30%

Computing platform HA technology (including cluster and stateless computing)

Technologies Related to Heterogeneous Computing and Intelligent Acceleration

Intelligent Operation & Maintenance of computing products (server management software and Ansible foundation)

5. Industry Solution Cases 10%

HPC solution (including the TaiShan HPC solution)

Artificial intelligence and intelligent edge solution.

View Free H13-211 Questions Online From the PassQuestion HCIA-Intelligent Computing V1.0 H13-211 Training Material

1. The history of robots is not long. In 1959, Engelberger and Devol of the United States built the world's first industrial robot, and the history of robots truly began.

Robots are usually divided into three generations according to their stage of development. What are these three generations? (Multiple choice)

A. Intelligent robot

B. Teach-and-playback robot

C. Robot with sensory perception

D. Thinking robot

Answer: ABC

2. What kind of discipline is artificial intelligence?

A. Mathematics and physiology

B. Psychology and physiology

C. Linguistics

D. A comprehensive, interdisciplinary, frontier discipline

Answer: D

3. Description of artificial intelligence: Artificial intelligence is a new technical science that studies and develops the theories, methods, and application systems for simulating, extending, and expanding human intelligence, and machine learning is one of its core research areas.

A. True

B. False

Answer: A

4. In what year was the term "artificial intelligence" first proposed?

A. 1946

B. 1960

C. 1916

D. 1956

Answer: D

5. In May 1997, in the famous "man versus machine" match, a computer defeated world chess champion Kasparov with a final score of 3.5 to 2.5. What was this computer called?

A. Deep Blue

B. Dark green

C. Thinking

D. Blue sky

Answer: A

6. In which year did Huawei formally begin offering its services as cloud services, working with more partners to provide richer artificial intelligence practices?

A. 2002

B. 2013

C. 2015

D. 2017

Answer: D
